Differential item functioning (DIF) analysis with intersectional groups or interaction effects requires many more statistical comparisons than analysis with traditional groupings. In large-scale, high-stakes testing programs, we usually look at

- race/ethnicity (with around five levels),

- sex/gender (two levels),

- special education status (two levels),

- advantage status (two or three levels), and

- language status (two levels).

A grouping variable with $k = 5$ levels produces $k - 1$ DIF comparisons per item (each group level compared with the reference level), so the traditional approach might involve $4 + 1 + 1 + 1 + 1 = 8$ total comparisons per item. An intersectional approach, in contrast, would involve as many as $5 \times 2 \times 2 \times 2 \times 2 - 1 = 79$ (all intersectional levels compared with a single reference intersectional level).
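
As a quick check on the arithmetic, the counts can be recomputed in base R from the listed numbers of levels. This is only a sketch: the variable names are mine, and advantage status is taken to have two levels.

```r
# Levels per grouping variable (names are illustrative, advantage status set to 2)
levels_per_var <- c(race = 5, sex = 2, sped = 2, advantage = 2, language = 2)

# Traditional: each variable analyzed separately, k - 1 comparisons each
n_traditional <- sum(levels_per_var - 1)

# Intersectional: every combination of levels, minus one reference cell
n_intersectional <- prod(levels_per_var) - 1

c(traditional = n_traditional, intersectional = n_intersectional)
```

Setting `advantage = 3` instead gives 9 traditional and 119 intersectional comparisons.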

The increase in comparisons inflates the Type I error rate (DIF flagged by chance). We can adjust for false positives, but a simple workaround is to use generalized and omnibus tests in place of repeated pairwise comparisons. Penfield (2001) demonstrated the generalized Mantel-Haenszel method and Magis et al. (2011) demonstrated a generalized logistic regression method. Neither study used interactions between groups, but the methods still apply. In both studies, the generalized methods tended to be more powerful, and a practical benefit is that they’re simpler to implement, with only one test run per item. Finch (2016) compared a few generalized methods.
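
To get a rough sense of the inflation, here is a sketch of the familywise error rate for one item under each approach. It assumes the comparisons are independent, which real DIF comparisons sharing a reference group are not, so treat the numbers as a ballpark. The p-values passed to `p.adjust()` are hypothetical.

```r
alpha <- 0.05

# Comparisons per item, computed from the numbers of levels per variable
lv <- c(5, 2, 2, 2, 2)
m <- c(traditional = sum(lv - 1), intersectional = prod(lv) - 1)

# Chance of at least one false positive if the m tests were independent
fwer <- 1 - (1 - alpha)^m
round(fwer, 3)

# Bonferroni per-test threshold that holds the familywise rate near alpha
alpha / m

# Holm adjustment applied to a few hypothetical p-values
p_raw <- c(0.001, 0.004, 0.034, 0.216)
p_adj <- p.adjust(p_raw, method = "holm")
p_adj
```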

See this post from 2022 for some background on DIF with intersectional or interaction effects.

Generalized methods also make it easier to reconsider our reference level. The standard approach in DIF is to refer all comparisons to the historically advantaged test takers (White, English-speaking, male, etc.). My coauthors and I (Albano et al., 2024) gave some recommendations on this point:

The convention of using the historically advantaged test takers as the reference group should also be reconsidered. Because DIF is usually structured as a relative comparison, results will not change except in their signs if reference and focal groups are switched. However, moving away from the conventional reference groups will help to decenter Male and White in discussions of test performance. Alternatives include centering more diverse groups, using models that evaluate DIF effects within groups relative to their own means (we have not seen this method used before) or using models that compare effects against an aggregate of all groups (e.g., Austin & French, 2020).
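
The point about signs can be checked with base R's `mantelhaen.test()`. The sketch below uses made-up counts for one item across three score strata (not data from this post) and the ETS delta transformation, $-2.35 \ln(\alpha_{MH})$. Swapping the reference and focal rows inverts the common odds ratio, so delta keeps its magnitude and flips sign.

```r
# Hypothetical counts: 2 groups x 2 responses x 3 matched score strata
counts <- array(
  c(20, 12, 30, 38,   # stratum 1: ref/focal correct, then ref/focal incorrect
    30, 22, 20, 28,   # stratum 2
    40, 33, 10, 17),  # stratum 3
  dim = c(2, 2, 3),
  dimnames = list(group = c("ref", "focal"),
    response = c("correct", "incorrect"),
    stratum = c("low", "mid", "high")))

# MH common odds ratio with the original group ordering
or_ref <- unname(mantelhaen.test(counts)$estimate)

# Same data with the two group rows swapped
or_swapped <- unname(mantelhaen.test(counts[2:1, , ])$estimate)

# ETS delta for each ordering
delta <- -2.35 * log(c(original = or_ref, swapped = or_swapped))
delta
```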

Omnibus testing comes from ANOVA, where we analyze a set of effects together as a whole before investigating specific comparisons. In the context of DIF, we might not even bother with the post hoc comparisons, since an omnibus DIF flag would disqualify an item regardless of the source(s) of variation.
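
As a small base R illustration of the omnibus idea, `mantelhaen.test()` accepts a table with more than two rows and then returns a single generalized Cochran-Mantel-Haenszel statistic across all groups. This is a sketch under my own assumptions (three groups, a Rasch model, rest-score strata with arbitrary cut points), not the epmr implementation.

```r
set.seed(1)
n_groups <- 3
n_per <- 300
n_items <- 10
group <- rep(paste0("g", 1:n_groups), each = n_per)
theta <- rnorm(n_groups * n_per)
b <- seq(-1, 1, length.out = n_items)

# Person-by-item difficulty matrix; item 1 is 0.6 logits harder for g3
b_mat <- matrix(b, nrow = length(theta), ncol = n_items, byrow = TRUE)
b_mat[group == "g3", 1] <- b_mat[group == "g3", 1] + 0.6

# Rasch response probabilities and simulated 0/1 scores
p <- plogis(theta - b_mat)
x <- matrix(rbinom(length(p), 1, p), nrow = nrow(p))

# Match on the rest score (items 2 to 10), binned into four strata
rest <- rowSums(x[, -1])
stratum <- cut(rest, breaks = c(-Inf, 2, 4, 6, Inf))

# One omnibus test for item 1 across all three groups at once
tab <- table(group, factor(x[, 1], levels = 0:1), stratum)
omni <- mantelhaen.test(tab)
omni$parameter  # degrees of freedom, (3 - 1) * (2 - 1)
omni$p.value
```

The test has (groups - 1) degrees of freedom for a dichotomous item, so one statistic replaces the separate pairwise comparisons.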

Here’s a simple demonstration with 9 groups, 400 people per group, 20 items, one item having DIF of 0.6 logits for three of the groups, and group abilities normally distributed with means ranging from -1 to 1 logits.

# Load tidyverse

library("tidyverse")

# We also need the epmr package from github

# devtools::install_github("talbano/epmr")

# Generating parameters

set.seed(260120)

ni <- 20

ng <- 9

np <- 400

# 2PL item parameters

# (a and b vary by item, and c is fixed at .2 for every item)

ip <- data.frame(a = exp(rnorm(ni, 0, 0.1225)), b = rnorm(ni), c = .2) |>

list() |> rep(ng)

# Induce DIF of 0.6 logits on item 1 for groups 2, 6, and 9

ip[[2]]$b[1] <- ip[[6]]$b[1] <- ip[[9]]$b[1] <- ip[[2]]$b[1] + .6

# Theta with range of means by group

theta <- lapply(seq(-1, 1, length = ng), function(m) rnorm(np, m, 1))

# Generate scores by group

scores <- lapply(1:ng, function(g) epmr::irtsim(ip[[g]], theta[[g]]))

scores <- data.frame(rep(paste0("g", 1:ng), each = np),

do.call("rbind", scores)) |> setNames(c("group", paste0("i", 1:ni)))

DIF is estimated for the first two items with dichotomous Mantel-Haenszel (MH) and logistic regression (LR), and then with generalized methods, using functions from the epmr package.

# Dichotomous DIF, MH and LR

# Eight comparisons per item with g5 as reference group

focal <- paste0("g", 1:ng)[-5]

out_mh <- out_lr <- vector("list", length = ng - 1) |> setNames(focal)

for (g in focal) {

index <- scores$group %in% c("g5", g)

temp_scores <- scores[index, -1]

temp_group <- scores$group[index]

out_mh[[g]] <- epmr::difstudy(temp_scores, temp_group, ref = "g5",

method = "mh", dif_items = 1:2, anchor_items = 3:ni)$uniform

out_lr[[g]] <- epmr::difstudy(temp_scores, temp_group, ref = "g5",

method = "lr", dif_items = 1:2, anchor_items = 3:ni)$uniform

}

# GMH and GLR DIF

out_gmh <- epmr::difstudy(scores[, -1], scores$group, ref = "g5",

method = "mh", dif_items = 1:2, anchor_items = 3:ni)$uniform

out_glr <- epmr::difstudy(scores[, -1], scores$group, ref = "g5",

method = "lr", dif_items = 1:2, anchor_items = 3:ni)$uniform

The generalized methods flagged item 1 (GMH p < .001, GLR p < .001) and item 2 (GMH p = .004, GLR p = .004) for DIF. The next table shows the results for all of the dichotomous comparisons. Even with an unadjusted p-value of .05, MH and LR don’t flag any significant DIF on items 1 or 2 because the thresholds for practical significance (delta for MH and r2d, the change in R-squared, for LR) aren’t met.

| item | group | delta | mh_p | ets_lev | lr_p | r2d | zum_lev |
|---|---|---|---|---|---|---|---|
| i1 | g1 | -0.864 | 0.034 | a | 0.023 | 0.008 | a |
| i1 | g2 | -1.276 | 0.001 | b | 0.001 | 0.019 | a |
| i1 | g3 | -0.321 | 0.445 | a | 0.508 | 0.001 | a |
| i1 | g4 | -0.167 | 0.714 | a | 0.665 | 0.000 | a |
| i1 | g6 | -1.110 | 0.004 | b | 0.001 | 0.016 | a |
| i1 | g7 | 0.535 | 0.216 | a | 0.138 | 0.004 | a |
| i1 | g8 | 0.582 | 0.203 | a | 0.156 | 0.003 | a |
| i1 | g9 | -0.068 | 0.946 | a | 0.975 | 0.000 | a |
| i2 | g1 | -0.245 | 0.594 | a | 0.598 | 0.000 | a |
| i2 | g2 | -0.268 | 0.523 | a | 0.525 | 0.001 | a |
| i2 | g3 | 0.290 | 0.492 | a | 0.567 | 0.001 | a |
| i2 | g4 | 0.246 | 0.553 | a | 0.380 | 0.001 | a |
| i2 | g6 | 1.007 | 0.010 | b | 0.006 | 0.013 | a |
| i2 | g7 | 0.810 | 0.044 | a | 0.066 | 0.006 | a |
| i2 | g8 | 0.883 | 0.038 | a | 0.041 | 0.007 | a |
| i2 | g9 | 0.935 | 0.036 | a | 0.036 | 0.007 | a |

We can also conduct omnibus DIF tests under an item response theory framework. I mentioned this in my earlier post on DIF with interaction effects. Using the lme4 package, a simple and direct approach is to estimate random effects for item, person, and group, and then an interaction between each DIF item to be tested and group. Here’s some code.

# Stack the data for lme4

scores_long <- scores |> tibble() |>

mutate(person = row_number(), .before = 1) |>

pivot_longer(cols = i1:i20, names_to = "item", values_to = "score") |>

mutate(i1 = ifelse(item == "i1", 1, 0), i2 = ifelse(item == "i2", 1, 0))

# Fit the models

# r2, with DIF estimated for items 1 and 2, is singular

r0 <- lme4::glmer(score ~ 0 + (1 | item) + (1 | person) + (1 | group),

family = binomial, data = scores_long,

control = lme4::glmerControl(optimizer = "bobyqa"))

r1 <- lme4::glmer(score ~ 0 + (1 | item) + (1 | person) + (1 + i1 | group),

family = binomial, data = scores_long,

control = lme4::glmerControl(optimizer = "bobyqa"))

r2 <- lme4::glmer(score ~ 0 + (1 | item) + (1 | person) + (1 + i1 + i2 | group),

family = binomial, data = scores_long,

control = lme4::glmerControl(optimizer = "bobyqa"))

# Compare r0 and r1 to test for DIF

anova(r0, r1)
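
It may help to see model r1 written out. The notation here is my own reading of the lme4 formula, not something taken from the package documentation: $p$ indexes persons, $i$ items, and $g$ groups, and $d_{1i}$ is the indicator that equals 1 for item i1 and 0 otherwise.

$$
\operatorname{logit} P(X_{pig} = 1) = \theta_p + \beta_i + \gamma_g + \delta_g \, d_{1i}
$$

$$
\theta_p \sim N(0, \sigma^2_{\theta}), \quad
\beta_i \sim N(0, \sigma^2_{\beta}), \quad
(\gamma_g, \delta_g) \sim N(\mathbf{0}, \Sigma)
$$

There is no fixed intercept because of the `0 +` in the formula, and $\beta_i$ acts as item easiness because it enters with a positive sign. Model r0 drops $\delta_g$, so r1 adds two parameters (the variance of $\delta_g$ and its covariance with $\gamma_g$), and comparing the two models asks whether item 1 varies across groups beyond the overall group effect.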

Here’s a subset of the ANOVA table output comparing fit for models with and without the DIF interaction term for item 1. After the table is the lme4 output for model r1. Person, item, and group have logit standard deviations of 0.71, 0.57, and 0.64 respectively, and performance on item 1 varies over groups with standard deviation 0.22.

| model | npar | AIC | logLik | -2log(L) | Chisq | Pr>Chisq |
|---|---|---|---|---|---|---|
| r0 | 3 | 86588 | -43291 | 86582 | NA | NA |
| r1 | 5 | 86577 | -43284 | 86567 | 14 | 0.0007 |

Generalized linear mixed model fit by maximum likelihood (Laplace

Approximation) [glmerMod]

Family: binomial ( logit )

Formula: score ~ 0 + (1 | item) + (1 | person) + (1 + i1 | group)

Data: scores_long

AIC BIC logLik -2*log(L) df.resid

86577.40 86623.32 -43283.70 86567.40 71995

Random effects:

Groups Name Std.Dev. Corr

person (Intercept) 0.7070

item (Intercept) 0.5737

group (Intercept) 0.6444

i1 0.2165 0.33

Number of obs: 72000, groups: person, 3600; item, 20; group, 9

No fixed effect coefficients

Finally, we can conduct omnibus DIF analysis using the mirt R package. The first step is to estimate a multi-group Rasch model. A separate mirt function then takes the multi-group output and runs models with and without the grouping variable interacting with each DIF item. The table below shows the output, where AIC and chi-square (X2) indicate DIF for item 1, and no fit indices indicate DIF for item 2.

mirt_mg <- mirt::multipleGroup(scores[, -1], model = 1,

itemtype = "Rasch", group = scores[, 1],

invariance = c(paste0("i", 3:20), "free_means", "free_variances"))

mirt_dif <- mirt::DIF(mirt_mg, "d", items2test = c("i1", "i2"))

| item | AIC | SABIC | HQ | BIC | X2 | df | p |
|---|---|---|---|---|---|---|---|
| i1 | -17.52 | 6.57 | 0.13 | 31.99 | 33.52 | 8 | 0.00 |
| i2 | 7.79 | 31.88 | 25.43 | 57.30 | 8.21 | 8 | 0.41 |
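
The columns in this table are tied together by simple arithmetic. The sketch below works only from the reported X2 and df and reproduces the AIC and BIC columns as penalty minus X2, which is why negative values favor the model with DIF. This is my reading of the numbers rather than a statement about mirt internals, and the sample size of 3600 (9 groups of 400) is assumed to be the N used in the BIC penalty.

```r
x2 <- c(i1 = 33.52, i2 = 8.21)  # X2 values reported in the table
df <- 8                          # one freed parameter in each of 8 groups
n <- 3600                        # 9 groups x 400 people (assumed N for BIC)

# Change in each criterion: added penalty minus improvement in fit
aic_diff <- 2 * df - x2
bic_diff <- df * log(n) - x2

# Likelihood ratio test p-value
p_val <- pchisq(x2, df = df, lower.tail = FALSE)

round(cbind(aic_diff, bic_diff, p_val), 2)
```

BIC penalizes the eight extra parameters more heavily than AIC at this sample size, which is how item 1 can be flagged by AIC and X2 but not by BIC.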

References

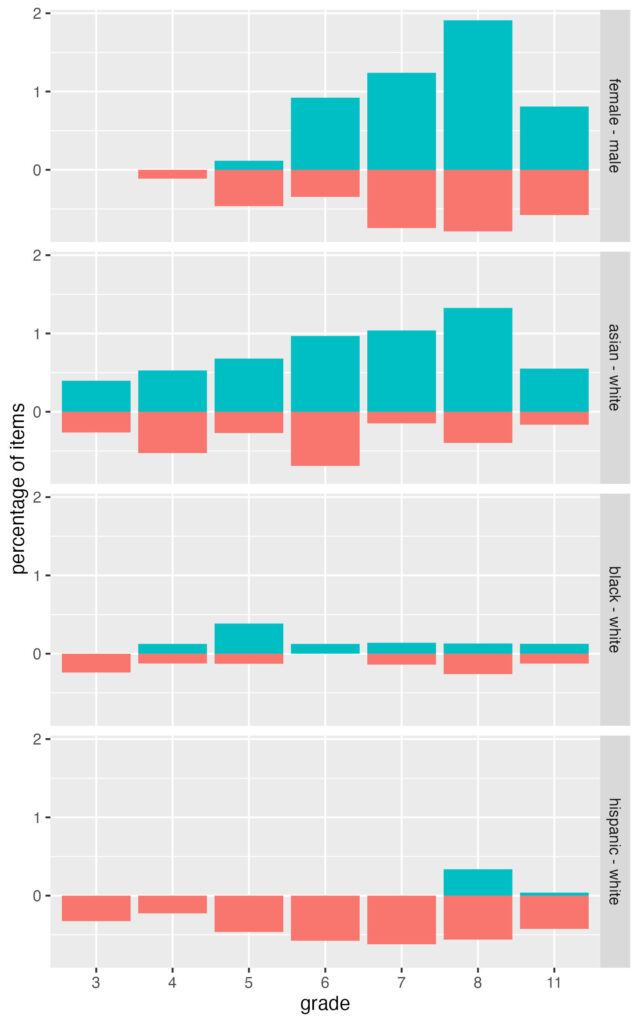

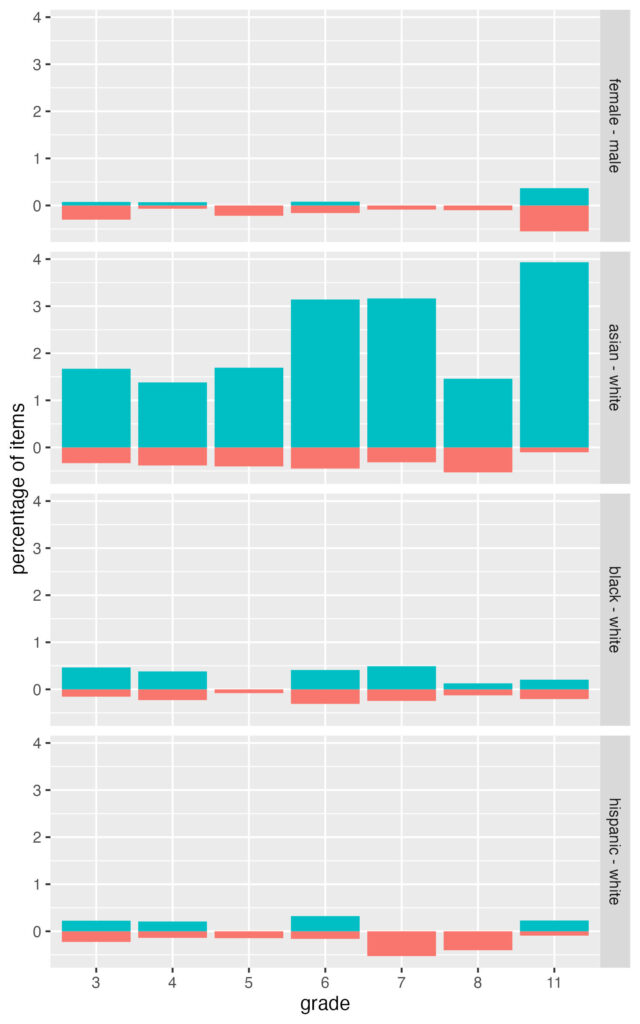

Albano, T., French, B. F., & Vo, T. T. (2024). Traditional vs intersectional DIF analysis: Considerations and a comparison using state testing data. Applied Measurement in Education, 37(1), 57–70.

Austin, B. W., & French, B. F. (2020). Adjusting group intercept and slope bias in predictive equations. Methodology, 16(3), 241–257.

Finch, W. H. (2016). Detection of differential item functioning for more than two groups: A Monte Carlo comparison of methods. Applied Measurement in Education, 29(1), 30–45.

Magis, D., Raîche, G., Béland, S., & Gérard, P. (2011). A generalized logistic regression procedure to detect differential item functioning among multiple groups. International Journal of Testing, 11(4), 365–386.

Penfield, R. D. (2001). Assessing differential item functioning among multiple groups: A comparison of three Mantel-Haenszel procedures. Applied Measurement in Education, 14(3), 235–259.