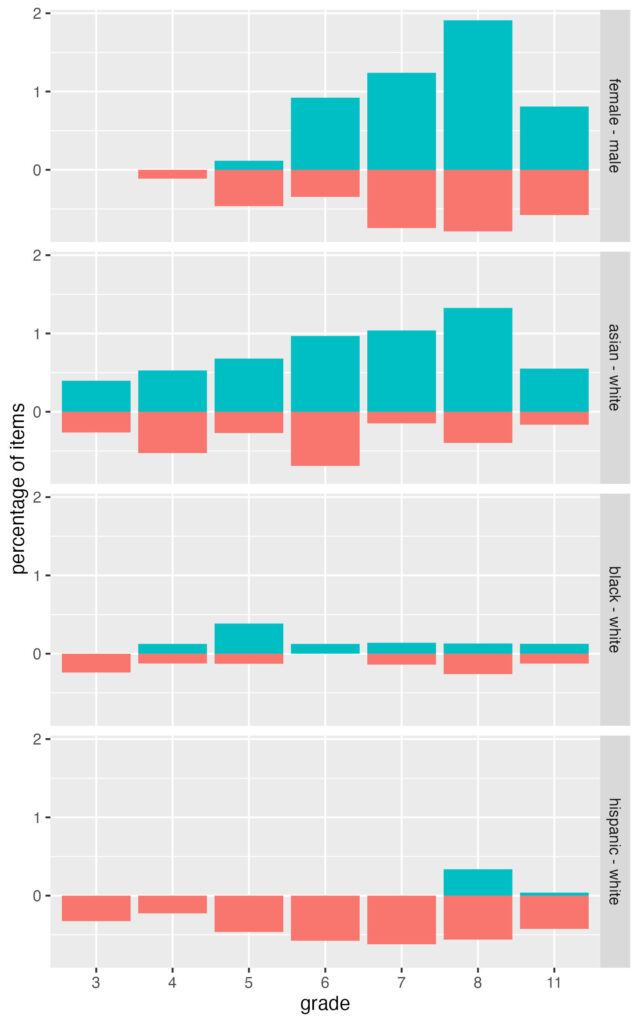

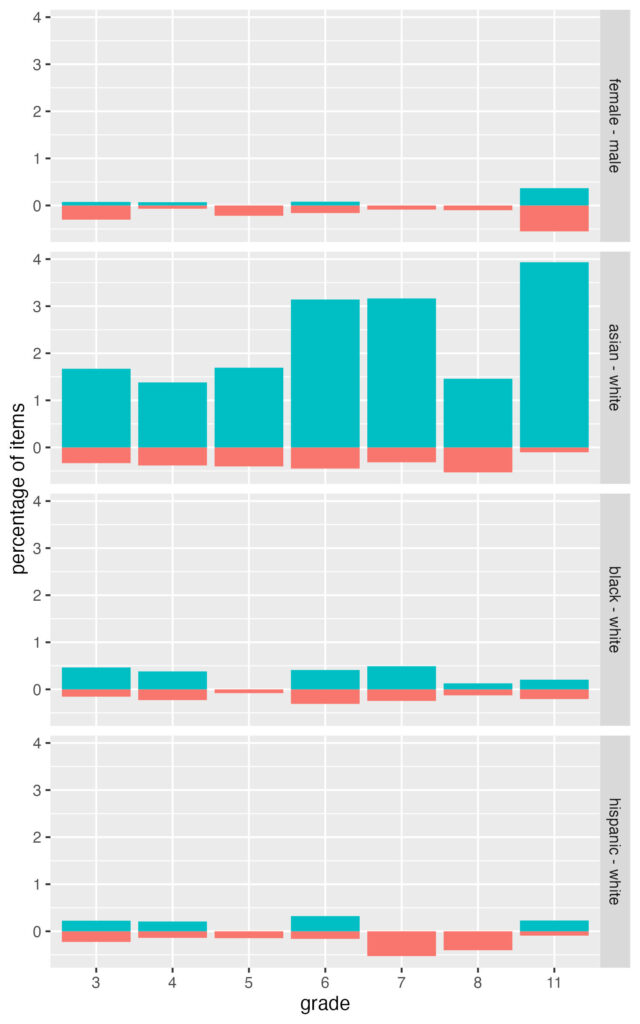

A couple of papers came out last year that consider intersectionality in differential item functioning (DIF) analysis. Russell and Kaplan (2021) introduced the idea, and demonstrated it with data from a state testing program. Then, Russell, Szendey, and Kaplan (2021) replicated the first study with more data. This is a neat application of DIF, and I’m surprised it hasn’t been explored until now. I’m sure we’ll see a flurry of papers on it in the next few years.

Side note, the second Russell study, published in Educational Assessment, doesn’t seem justified as a separate publication. They use the same DIF method as in the first paper, they appear to use the same data source, and they have similar findings. They also don’t address in the second study any of the limitations of the original study (e.g., they still use a single DIF method, don’t account for Type I error increase, don’t have access to item content, don’t have access to pilot vs operational items). The second study really just has more data.

Why is the intersectional approach neat? Because it can give us a more accurate understanding of potential item bias, to the extent that it captures a more realistic representation of the test taker experience.

The intersectional approach to DIF is a simple extension of the traditional approach, one that accounts for interactions among grouping variables. We can think of the traditional approach as focusing on main effects for distinct variables like gender (female compared with male) and race (Black compared with White). The intersectional approach simply interacts the grouping variables to examine the effects of membership in intersecting groups (e.g., Black female compared with White male).
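As a minimal sketch of what interacting the grouping variables means in practice, the base R snippet below crosses two person-level variables into a single intersectional grouping variable and sets White male as the reference level. The data frame `persons_demo` and its values are made up for illustration.

```r
# Hypothetical person-level data; names and values are illustrative only
persons_demo <- data.frame(
  gender = c("female", "male", "female", "male"),
  race   = c("Black", "Black", "White", "White")
)

# Cross the two variables so each person belongs to one intersecting group
persons_demo$group <- interaction(persons_demo$gender, persons_demo$race,
                                  sep = "_")

# Make White male the reference group for later comparisons
persons_demo$group <- relevel(persons_demo$group, ref = "male_White")

levels(persons_demo$group)
```

The traditional approach would instead use `gender` and `race` one at a time, each with its own reference level.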

Interaction DIF models

I like to organize DIF problems using explanatory item response theory (Rasch) models. In the base model, which assumes no DIF, the log-odds $\eta_{ij}$ of correct response on item $i$ for person $j$ can be expressed as a linear function of overall mean performance $\gamma_0$ plus mean performance on the item $\beta_{i}$ and the person $\theta_j$:

$$\eta_{ij} = \gamma_0 + \beta_i + \theta_j,$$

with $\beta$ estimated as a fixed effect and $\theta \sim \mathcal{N}(0, \, \sigma^{2})$, since the overall mean is already carried by $\gamma_0$. $\gamma_0 + \beta_i$ captures item difficulty, with higher values indicating easier items.
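To make the scale concrete, here is a small sketch with made-up parameter values (not estimates from any data) that converts the log-odds to a probability of correct response using the inverse logit, `plogis` in base R.

```r
gamma_0 <- 0.5   # overall mean performance (hypothetical)
beta_i  <- -1.0  # item effect; negative means harder than average
theta_j <- 0.8   # person effect (hypothetical)

eta_ij <- gamma_0 + beta_i + theta_j  # log-odds of a correct response
p_ij   <- plogis(eta_ij)              # exp(eta) / (1 + exp(eta))
p_ij
```

With these values the log-odds is 0.3, which corresponds to a probability of about 0.57.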

Before we formulate DIF, we estimate a shift in mean performance by group:

$$\eta_{ij} = \gamma_0 + \gamma_{1}group_j + \beta_i + \theta_j.$$

In a simple dichotomous comparison, we can use indicator coding in $group$, where the reference group is coded as 0 and the focal group as 1. Then, $\gamma_0$ estimates the mean performance for the reference group and $\gamma_1$ is the impact or disparity for the focal group expressed as a difference from $\gamma_0$. To estimate DIF, we interact group with item:

$$\eta_{ij} = \gamma_0 + \gamma_{1}group_j + \beta_{0i} + \beta_{1i}group_j + \theta_j.$$

Now, $\beta_{0i}$ is the item difficulty estimate for the reference group and $\beta_{1i}$ is the DIF effect, expressed as a difference in performance on item $i$ for the focal group, controlling for $\theta$.

The previous equation captures the traditional DIF approach. Separate models would be estimated, for example, with gender in one model and then race/ethnicity in another. The interaction effect DIF approach consolidates terms into a single model with multiple grouping variables. Here, we replace $group$ with $f_j$ for female and $b_j$ for Black:

$$\eta_{ij} = \gamma_0 + \gamma_{1}f_j + \gamma_{2}b_j + \gamma_{3}f_{j}b_j + \beta_{0i} + \beta_{1i}f_j + \beta_{2i}b_j + \beta_{3i}f_{j}b_j + \theta_j.$$

With multiple grouping variables, again using indicator coding, $\gamma_0$ estimates the mean performance for the reference group, White men. $\gamma_{1}$ and $\gamma_{2}$ are the deviations in mean performance for White women and Black men, respectively, from the reference group. $\gamma_3$ is the interaction, the additional deviation for Black women beyond the sum of the two main effects, so the total deviation for Black women is $\gamma_1 + \gamma_2 + \gamma_3$. The $\beta$ terms are interpreted similarly but in reference to performance on item $i$, with $\beta_{1i}$, $\beta_{2i}$, and $\beta_{3i}$ as DIF effects.
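Substituting the indicator values into the $\gamma$ terms of the model gives the expected mean performance for each of the four groups, which is a quick way to check how the coefficients combine:

$$\begin{aligned}
\text{White male } (f_j = 0,\ b_j = 0) &: \ \gamma_0 \\
\text{White female } (f_j = 1,\ b_j = 0) &: \ \gamma_0 + \gamma_1 \\
\text{Black male } (f_j = 0,\ b_j = 1) &: \ \gamma_0 + \gamma_2 \\
\text{Black female } (f_j = 1,\ b_j = 1) &: \ \gamma_0 + \gamma_1 + \gamma_2 + \gamma_3
\end{aligned}$$

The same substitution applies to the $\beta$ terms for item $i$.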

R code

Here’s what the above models look like when translated to lme4 (Bates et al., 2015) notation in R.

```r
# lme4 code for running interaction effect DIF via explanatory Rasch
# modeling, via generalized linear mixed model
# family specifies the binomial/logit link function
# data_long would contain scores in a long/tall/stacked format
# with one row per person per item response
# item, person, f, and b are then separate columns in data_long
library(lme4)

# Base model
glmer(score ~ 1 + item + (1 | person),
  family = "binomial", data = data_long)

# Gender DIF with main effects
glmer(score ~ 1 + f + item + f:item + (1 | person),
  family = "binomial", data = data_long)

# Race/ethnicity DIF with main effects
glmer(score ~ 1 + b + item + b:item + (1 | person),
  family = "binomial", data = data_long)

# Gender and race/ethnicity DIF with interaction effects
glmer(score ~ 1 + f + b + item + f:b + f:item + b:item + f:b:item + (1 | person),
  family = "binomial", data = data_long)

# Shortcut for writing out the same formula as the previous model
# This notation will automatically create all main effects and
# 2x and 3x interactions
glmer(score ~ 1 + f * b * item + (1 | person),
  family = "binomial", data = data_long)
```
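The models above assume `data_long` already exists. As a rough sketch of one way to build it, the base R code below stacks a small, made-up wide data frame into one row per person per item. The objects `data_wide_demo` and `data_long_demo`, and the item column names, are assumptions for illustration.

```r
# Hypothetical wide data: one row per person, one column per scored item
data_wide_demo <- data.frame(
  person = factor(1:4),
  f = c(1, 0, 1, 0),       # indicator for female
  b = c(1, 1, 0, 0),       # indicator for Black
  item1 = c(1, 0, 1, 1),
  item2 = c(0, 0, 1, 1),
  item3 = c(1, 1, 0, 1)
)

item_cols <- c("item1", "item2", "item3")
n_items <- length(item_cols)

# Stack the item columns, repeating the person-level columns for each item
data_long_demo <- data.frame(
  person = rep(data_wide_demo$person, times = n_items),
  f      = rep(data_wide_demo$f, times = n_items),
  b      = rep(data_wide_demo$b, times = n_items),
  item   = factor(rep(item_cols, each = nrow(data_wide_demo))),
  score  = unlist(data_wide_demo[item_cols], use.names = FALSE)
)

head(data_long_demo)
```

Storing `item` and `person` as factors matters here, since the formulas treat them as categorical.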

In my experience, modeling fixed effects for items like this is challenging in lme4 (slow, with convergence issues). Random effects for items would simplify things, but we would have to adopt a different theoretical perspective, where we’re less interested in specific items and more interested in DIF effects, and the intersectional experience, overall.

Here’s what the code looks like with random effects for items and persons. In place of DIF effects, this will produce variances for each DIF term, which tell us how variable the DIF effects are across items by group.

```r
# Gender and race/ethnicity DIF with interaction effects
# Random effects for items and persons
glmer(score ~ 1 + f + b + f:b + (1 + f + b + f:b | item) + (1 | person),
  family = "binomial", data = data_long)

# Alternatively
glmer(score ~ 1 + f * b + (1 + f * b | item) + (1 | person),
  family = "binomial", data = data_long)
```
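To show where the DIF variances end up, here is a hedged sketch that fits the random effects model to a small simulated data set and pulls out the item-level variance components with `VarCorr` and the item-specific deviations with `ranef`. Everything about the simulation (sample sizes, seed, no true DIF) is an assumption for illustration, so the variances should be near zero and lme4 may report a singular fit.

```r
library(lme4)

set.seed(1)
n_person <- 200
n_item <- 10

# Simulated person-level indicators and abilities (all values hypothetical)
persons_sim <- data.frame(
  person = factor(seq_len(n_person)),
  f = rbinom(n_person, 1, 0.5),
  b = rbinom(n_person, 1, 0.5),
  theta = rnorm(n_person)
)
items_sim <- data.frame(
  item = factor(seq_len(n_item)),
  easiness = rnorm(n_item)
)

# Cross join: one row per person per item
sim_long <- merge(persons_sim, items_sim)
sim_long$score <- rbinom(nrow(sim_long), 1,
                         plogis(sim_long$theta + sim_long$easiness))

fit_sim <- glmer(score ~ 1 + f * b + (1 + f * b | item) + (1 | person),
                 family = "binomial", data = sim_long)

# Variances and covariances of the item-level terms, including DIF terms
VarCorr(fit_sim)$item

# Item-specific deviations for each term, on the log-odds scale
head(ranef(fit_sim)$item)
```

The `ranef` output gives one row per item, so unusually large values in the `f`, `b`, or `f:b` columns would point to items worth a closer look.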

While lme4 provides a flexible framework for explanatory Rasch modeling (Doran et al., 2007), DIF analysis gets complicated when we consider anchoring, which I’ve ignored in the equations and code above. In practice, ideally, our IRT model would include a subset of items where we are confident that DIF is negligible. These items anchor our scale and provide a reference point for comparing performance on the potentially problematic items.

The mirt R package (Chalmers, 2012) has a lot of nice features for conducting DIF analysis via IRT. Here’s how we get at main effects and interaction effects DIF using mirt::multipleGroup and mirt::DIF. The former runs the model and the latter reruns it, testing the significance of the multi group extension by item.

```r
# mirt code for interaction effect DIF
library(mirt)

# Estimate the multi group Rasch model
# Here, data_wide is a data frame containing scored item responses in
# columns, one per item
# group_var is a vector of main effect or interacting group values,
# one per person (e.g., "fh" and "mw" for female-hispanic and male-white)
# anchor_items is a vector of item names, matching columns in data_wide,
# for the items that are not expected to vary by group, these will
# anchor the scale prior to DIF analysis
# See the mirt help files for more info
mirt_mg_out <- multipleGroup(data_wide, model = 1, itemtype = "Rasch",
  group = group_var,
  invariance = c(anchor_items, "free_means", "free_variances"))

# Run likelihood ratio DIF analysis
# For each item, the original model is fit with and without the
# grouping variable specified as an interaction with item
# Output will then specify whether inclusion of the grouping variable
# improved model fit per item
# items2test identifies the columns for DIF analysis
# Apparently, items2test has to be a numeric index, I can't get a vector
# of item names to work, so these would be the non-anchor columns in
# data_wide
# One way to get that index (assumes data_wide holds only item columns)
dif_items <- which(!colnames(data_wide) %in% anchor_items)
mirt_dif_out <- DIF(mirt_mg_out, "d", items2test = dif_items)
```

One downside to the current setup of mirt::multipleGroup and mirt::DIF is there isn’t an easy way to iterate through separate focal groups. The code above will test the effects of the grouping variable all at once. So, we’d have to run this separately for each dichotomous comparison (e.g., subsetting the data to Hispanic female vs White male, then Black female vs White male, etc.) if we want tests by focal group.
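As a sketch of how that subsetting could be organized, the base R helper below builds one set of row indices per focal group, each pairing that group with the reference group. The function name `pairwise_rows` and the toy vector `group_demo` are hypothetical, and the mirt calls are left as comments because they depend on data not shown here.

```r
# Return a named list of row indices, one element per focal group,
# each containing the rows for that focal group plus the reference group
pairwise_rows <- function(group_var, reference) {
  group_var <- as.character(group_var)
  focal_groups <- setdiff(unique(group_var), reference)
  rows <- lapply(focal_groups, function(g) {
    which(group_var %in% c(reference, g))
  })
  names(rows) <- focal_groups
  rows
}

# Toy grouping vector, using labels in the style of "fh" and "mw"
group_demo <- c("mw", "fh", "fb", "mw", "fb", "fh", "mw")
rows_by_focal <- pairwise_rows(group_demo, reference = "mw")
rows_by_focal

# Each element could then feed a separate two-group analysis, e.g.:
# for (g in names(rows_by_focal)) {
#   keep <- rows_by_focal[[g]]
#   fit_g <- multipleGroup(data_wide[keep, ], model = 1, itemtype = "Rasch",
#     group = group_var[keep],
#     invariance = c(anchor_items, "free_means", "free_variances"))
#   dif_g <- DIF(fit_g, "d", items2test = dif_items)
# }
```

Running separate two-group models like this multiplies the number of significance tests, so the Type I error concern raised earlier applies here as well.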

Of course, interaction effects DIF can also be analyzed outside of IRT (e.g., with the Mantel-Haenszel method). It simply involves more comparisons per item than with the main effect approach where we consider each grouping variable separately. For example, gender (with two levels, female, male) and race (with three levels, Black, Hispanic, White) gives us 3 comparisons per item with main effects, whereas we have 5 comparisons per item with interaction effects.
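As a rough illustration of the Mantel-Haenszel route, the sketch below uses `mantelhaen.test` from base R's stats package on simulated data with a single intersectional comparison (Black female vs White male). The data, the choice to match on the rest score, and the five score bins are all assumptions for illustration. With real data this would be repeated for each item and each focal group.

```r
set.seed(42)
n <- 1000
n_item <- 10

# Simulated data, all hypothetical: group labels, abilities, item scores
group <- sample(c("White male", "Black female"), n, replace = TRUE)
theta <- rnorm(n)
easiness <- seq(-1, 1, length.out = n_item)
scores <- sapply(easiness, function(e) rbinom(n, 1, plogis(theta + e)))

# Study item is column 1; match on the rest score, binned into five strata
studied <- scores[, 1]
rest <- rowSums(scores[, -1])
strata <- cut(rest, breaks = c(-Inf, 2, 4, 5, 7, Inf))

# 2 x 2 x K table: group by item score within each matching stratum
tab <- table(group, studied, strata)

mh <- mantelhaen.test(tab)
mh$estimate  # common odds ratio; values near 1 suggest negligible DIF
mh$p.value
```

No DIF was simulated here, so the common odds ratio should land near 1, though the exact value depends on the seed.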

After writing up all this example code, I’m realizing it would be much more useful if I demonstrated it with output. I’ll try to round up some data and share results in a future post.

References

Bates, D., Mächler, M., Bolker, B., & Walker, S. (2015). Fitting linear mixed-effects models using lme4. Journal of Statistical Software, 67(1), 1–48.

Chalmers, R. P. (2012). mirt: A multidimensional item response theory package for the R environment. Journal of Statistical Software, 48, 1–29.

Doran, H., Bates, D., Bliese, P., & Dowling, M. (2007). Estimating the multilevel Rasch model: With the lme4 package. Journal of Statistical Software, 20(2), 1–18.

Russell, M., & Kaplan, L. (2021). An intersectional approach to differential item functioning: Reflecting configurations of inequality. Practical Assessment, Research & Evaluation, 26(21), 1–17.

Russell, M., Szendey, O., & Kaplan, L. (2021). An intersectional approach to DIF: Do initial findings hold across tests? Educational Assessment, 26, 284–298.